On Linux servers you sometimes have to switch to the superuser (for example with su). This user has privileged rights, and things can go badly wrong if you lose track of whether you are currently working as a ‘normal’ user or as the superuser. To make the difference more obvious in a Linux shell, you can add colors to your Bash prompt.

On Debian systems, you only have to edit the file ~/.bashrc. For a normal user, add this code block:

    # uncomment for a colored prompt, if the terminal has the capability; turned
    # off by default to not distract the user: the focus in a terminal window
    # should be on the output of commands, not on the prompt
    force_color_prompt=yes

    if [ -n "$force_color_prompt" ]; then
        if [ -x /usr/bin/tput ] && tput setaf 1 >&/dev/null; then
            # We have color support; assume it's compliant with Ecma-48
            # (ISO/IEC-6429). (Lack of such support is extremely rare, and such
            # a case would tend to support setf rather than setaf.)
            color_prompt=yes
        else
            color_prompt=
        fi
    fi

    if [ "$color_prompt" = yes ]; then
        PS1='${debian_chroot:+($debian_chroot)}\[\033[01;32m\]\u\[\033[01;34m\]@\[\033[01;36m\]\h\[\033[01;33m\]\w\[\033[01;35m\]\$ \[\033[00m\]'
    else
        PS1='${debian_chroot:+($debian_chroot)}\u@\h:\w\$ '
    fi
    unset color_prompt force_color_prompt
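The numbers inside the `\033[01;NNm` sequences of the colored PS1 are ANSI color codes, and `\033[00m` resets the color again. As a rough way to preview them before touching your prompt, the following sketch prints each code used above in its own color. The helper name `show_color` is made up for this example and is not part of any Debian file.

```shell
# Hypothetical helper for illustration: print a label in a bold ANSI color.
# Arguments: $1 = color code (e.g. 31-36), $2 = label text.
show_color() {
    printf '\033[01;%sm%s\033[00m\n' "$1" "$2"
}

# The codes used in the PS1 lines of this post:
show_color 31 'red     (user name, root)'
show_color 32 'green   (user name, normal user)'
show_color 34 'blue    (the @ sign)'
show_color 36 'cyan    (host name)'
show_color 33 'yellow  (working directory)'
show_color 35 'magenta (the $ or # at the end)'
```

Paste it into a terminal to see how the colors look with your terminal theme. The exact shades depend on the terminal emulator.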

For the root user (/root/.bashrc), change the color settings like this. The only difference is that the user name is shown in red (31) instead of green (32):

    # uncomment for a colored prompt, if the terminal has the capability; turned
    # off by default to not distract the user: the focus in a terminal window
    # should be on the output of commands, not on the prompt
    force_color_prompt=yes

    if [ -n "$force_color_prompt" ]; then
        if [ -x /usr/bin/tput ] && tput setaf 1 >&/dev/null; then
            # We have color support; assume it's compliant with Ecma-48
            # (ISO/IEC-6429). (Lack of such support is extremely rare, and such
            # a case would tend to support setf rather than setaf.)
            color_prompt=yes
        else
            color_prompt=
        fi
    fi

    if [ "$color_prompt" = yes ]; then
        PS1='${debian_chroot:+($debian_chroot)}\[\033[01;31m\]\u\[\033[01;34m\]@\[\033[01;36m\]\h\[\033[01;33m\]\w\[\033[01;35m\]\$ \[\033[00m\]'
    else
        PS1='${debian_chroot:+($debian_chroot)}\u@\h:\w\$ '
    fi
    unset color_prompt force_color_prompt
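Since the two blocks differ only in one color code, you could also keep a single snippet and choose the color from the user ID. The following is a minimal sketch of that idea, not what Debian ships: the function name `prompt_user_color` and the variable `user_color` are invented for this example, and it assumes the same snippet is placed in both ~/.bashrc and /root/.bashrc (or in a file both of them source).

```shell
# Hypothetical helper: print the ANSI color code for the user name.
# $1 = numeric user ID; 31 (red) for root (UID 0), 32 (green) otherwise.
prompt_user_color() {
    if [ "$1" -eq 0 ]; then
        echo 31
    else
        echo 32
    fi
}

# Pick the color once, when the shell starts, from the current user ID.
user_color=$(prompt_user_color "$(id -u)")

# Same prompt as in the blocks above, with the user name color substituted.
PS1='${debian_chroot:+($debian_chroot)}\[\033[01;'"$user_color"'m\]\u\[\033[01;34m\]@\[\033[01;36m\]\h\[\033[01;33m\]\w\[\033[01;35m\]\$ \[\033[00m\]'
unset user_color
```

This version skips the tput check from the blocks above, so it assumes a terminal with color support.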

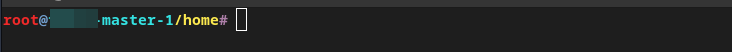

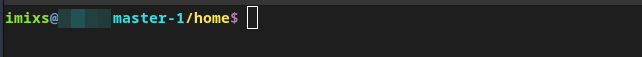

That’s it. Now you have a red marker if you are logged in as a superuser and a green marker if you are working as a normal user:

Normal User:

Root: